I think it can even survive short outages of ES itself (I should check that). It runs at its own pace, so even if the application process goes down, the messages produced by that process will still be written to ES eventually.

FILEBEATS VS LOGSTASH CODE

Which of the following two methods has better performance?

1. Using Spring Boot ResponseBodyAdvice to log every request and response that is sent to the client directly to ElasticSearch.
2. Logging every request and response into a log file and using filebeat and/or logstash to send them to ElasticSearch.

First off, I assume that you have a distributed application; otherwise just write your stuff to a log file and that's it. I also assume that you have quite a lot of logs to manage; if you're planning to log a couple of messages an hour, then it doesn't really matter which way you go, both will do the job.

Technically both ways can be implemented, although for the first path I would suggest a different approach. At least, I did something similar ~5 years ago in one of my projects: create a custom log appender that throws everything into some queue (for async processing), and from there use Apache Flume, which can write to the DB of your choice in a transactional manner with batch support, "all-or-nothing" semantics, etc. This approach solves issues that might appear in the first option that you've presented, while some other issues will be left unsolved.

If I compare the first and the second option that you've presented, I think you're better off with filebeat / logstash, or even both, to write to ES. Here is why:

When you log in the advice, you will "eat" the resources of your JVM: memory and CPU to maintain an ES connection pool, and a thread pool for doing the actual logging (otherwise the business flow might slow down because of logging the requests to ES). In addition, you won't be able to write "in batch" into Elasticsearch without custom code, and instead will have to create an "insert" per log message, which might be wasteful.
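The queue idea mentioned above (hand log lines to a queue for async processing, then write them out in batches) could be sketched roughly as follows. This is only an illustration under assumptions: `BatchingLogQueue` and its `sink` parameter are hypothetical names, not part of Spring, Flume, or any ES client, and the sink stands in for whatever bulk writer you actually use.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.LinkedBlockingQueue;
import java.util.concurrent.TimeUnit;
import java.util.function.Consumer;

/** Hypothetical helper: buffers log lines and hands them to a sink in batches. */
final class BatchingLogQueue implements AutoCloseable {
    private final BlockingQueue<String> queue;
    private final int batchSize;
    private final Consumer<List<String>> sink; // stand-in for a bulk writer (assumption)
    private final Thread worker;
    private volatile boolean running = true;

    BatchingLogQueue(int capacity, int batchSize, Consumer<List<String>> sink) {
        this.queue = new LinkedBlockingQueue<>(capacity);
        this.batchSize = batchSize;
        this.sink = sink;
        this.worker = new Thread(this::drainLoop, "log-batcher");
        this.worker.setDaemon(true);
        this.worker.start();
    }

    /** Called from request threads; never blocks. Returns false if the buffer is full. */
    boolean offer(String logLine) {
        return queue.offer(logLine);
    }

    private void drainLoop() {
        List<String> batch = new ArrayList<>();
        // Keep going until close() was called and the buffer is empty.
        while (running || !queue.isEmpty()) {
            try {
                String first = queue.poll(100, TimeUnit.MILLISECONDS);
                if (first == null) {
                    continue; // nothing arrived within the timeout
                }
                batch.add(first);
                queue.drainTo(batch, batchSize - 1); // take up to batchSize lines in total
                sink.accept(new ArrayList<>(batch)); // one write per batch, not per message
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
                return;
            } catch (RuntimeException e) {
                // In this sketch a failed batch is dropped; real code would retry or spill to disk.
            } finally {
                batch.clear();
            }
        }
    }

    /** Stops the worker after it has flushed whatever is still buffered. */
    @Override
    public void close() {
        running = false;
        try {
            worker.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
    }
}
```

Note that the buffer, the worker thread, and the failure handling all still live inside your JVM, which is exactly the cost that the filebeat / logstash route avoids.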

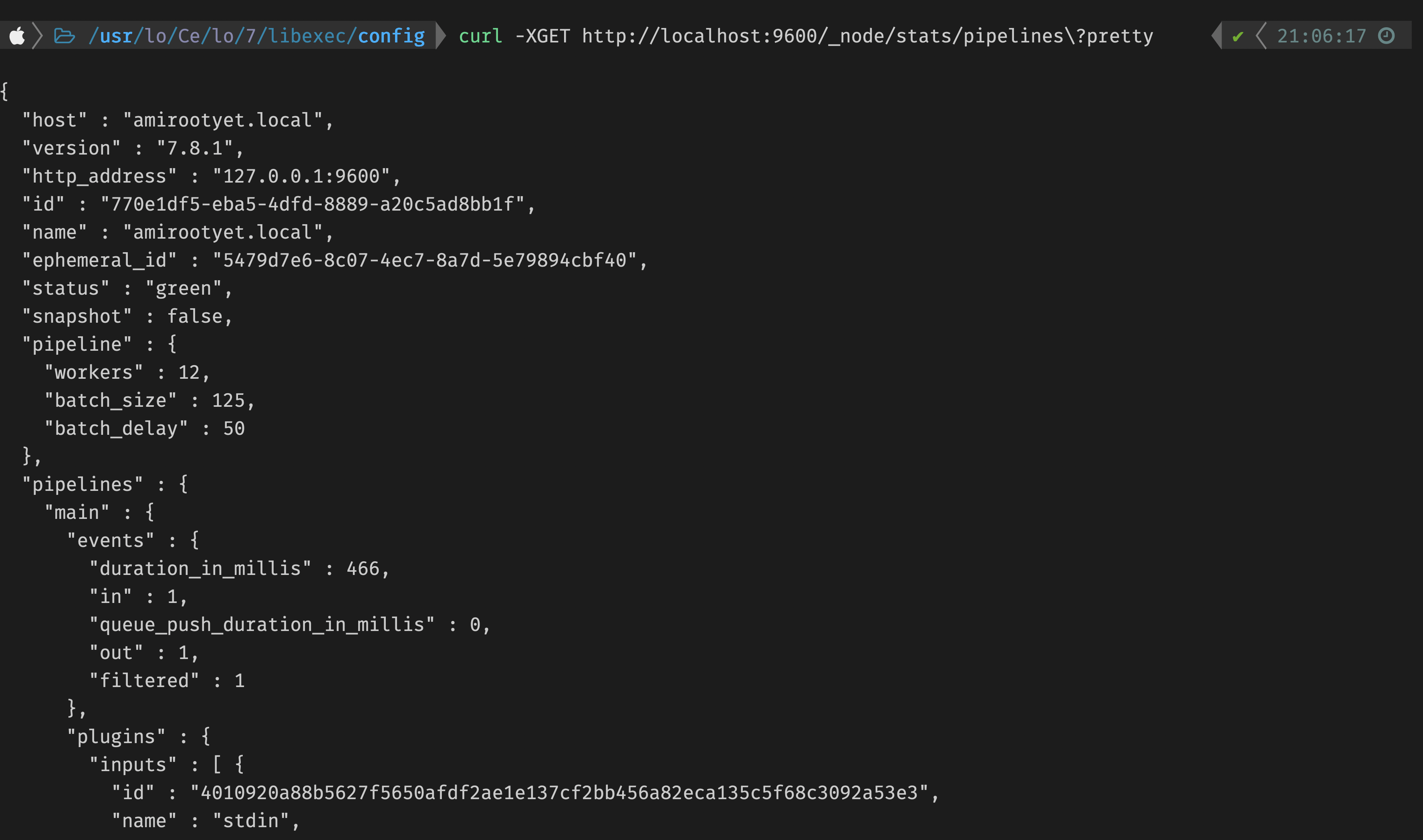

" message" => " 83.149.9.216 - \" GET /presentations/logstash-monitorama-2013/images/kibana-search.png HTTP/1.I’m using a Spring Boot back-end to provide some restful API and need to log all of my request-response logs into ElasticSearch. " source" => " /var/log/dummy/logstash-tutorial.log", " request" => " /presentations/logstash-monitorama-2013/images/kibana-search.png", 再度Filebeatを起動すると、最初からロードされます $ sudo /etc/init.d/filebeat start Starting filebeat ( via systemctl ): rvice.
